To live and lie in LA: the science of lying

I can’t remember the last time a game made me want, and need, to pay as much attention to its details as LA Noire does. During interviews and interrogations I stare daggers at the characters on screen. Still, I make mistakes. I select Doubt when the answer was Truth because I think I see an averted gaze, or I select Truth when I should have caught a lie because I missed a curl at the edge of a liar’s mouth. I begin to second-guess my ability to read faces, but I restart and try again. This has made me quite critical of the game’s main mechanic. While its implementation is solid for a first effort, I wonder how accurately the game simulates the mannerisms of human expression (lying in particular).

Micro-Expressions

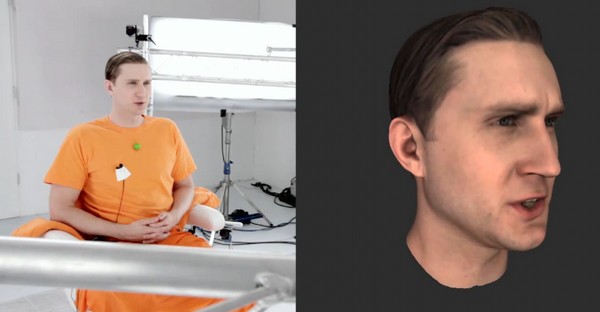

Anyone who has seen the production videos for LA Noire knows that painstaking effort went into capturing the micro-expressions of hundreds of actors, which were then faithfully recreated in the game. If you’ve ever studied the work of Paul Ekman, or watched a couple of episodes of Lie to Me (which is based on Ekman’s research), you know that micro-expressions occur at a reactionary level, faster than our conscious minds can control. Our faces betray us when we lie, but they also reinforce us when we tell the truth. It is not so much lying as it is an unconscious expression of emotion. Interestingly, Ekman found that certain expressions are universal. Tribes of people who have no contact with the modern world both understand and form the same expressions to convey certain emotions. He identifies seven universal emotions: anger, fear, disgust, contempt, surprise, sadness, and happiness. These expressions can flash across the face very quickly, in the moment, often in reaction to external stimuli.

And that’s part of what makes LA Noire confusing. We see the interviewee’s face only while they are speaking, followed by a prolonged reaction to their own statement while we decide how to respond to it. It is in those moments after the statement that we are most likely to see fear, contempt, or maybe even pride, and that’s what we use to choose between Truth and Doubt. This distinction is much more difficult than the Lie condition, which depends on having a piece of evidence. But isn’t it also possible to know when someone is lying even without evidence?

It would make the game far more difficult to play and design, but the player should be able to see the interviewee’s face while speaking to them. Much more emphasis should also be given to the expressions interviewees make while they deliver a statement, rather than to the shifty-eyed gaze that plays while you are selecting a response. A conversation with NA guest Ryan made me realize how hard this might be for the player, because LA Noire does very little to provide practice with feedback for lie detection. There is an intro mission, but no real learning opportunities that give feedback on failures, and feedback is the most critical element of learning through practice. You know that you got something wrong, but you never know why.

What about other signals?

I also wonder how much attention the game gave to other forms of communication. Lie detection is not restricted to micro-expressions. The language we use can also give us away, as in Bill Clinton’s denial, “I did not have sexual relations with that woman.” The phrase ‘that woman’ (as opposed to ‘her’ or ‘Miss Lewinsky’) creates distance between the speaker and the person in question. When we lie or are in trouble, we tend to use distancing language like this that we wouldn’t use in everyday conversation. If we make up a story we know is untrue, we often cannot retell it event for event, and we would have even more trouble recounting those events in reverse order. I wonder whether the writing of LA Noire fully accounts for these nuances. That said, about halfway through the game, I can’t recall any of these contextual clues jumping out at me.

Body language is also very relevant here. When we try to remember things, we tend to stare off into empty space, as if retreating into our brains to recall events. A liar might stare directly at the other person, because the lie they tell does not come from a memory. They might then avert their gaze afterward because they cannot maintain that confidence. When telling a lie, we also tend to unconsciously cover our mouths or tug on our earlobes. Humans unconsciously read other emotions from body language as well: a puffed chest, a clenched fist, crossed arms, or sweat on the brow all carry potential meaning.

A combination of communication signals

Given LA Noire’s emphasis on facial capture, I do not know whether these other clues were woven into the fabric of the game. But lying or not, communication between individuals is not confined to speech, or even facial expression. It is a combination of many factors, including body posture and interpersonal distance.

Sometimes however… a cigar is just a cigar.

I don’t mean to knock LA Noire in any way; it’s a great display of technology. In more than 10 hours of play I never once had an Uncanny Valley moment. However, I feel that the NPCs sometimes give mixed subconscious signals (e.g., word choice that doesn’t match body language), because they are not actual human beings. I assume this is why it is often so difficult to decide between Truth and Doubt. Still, the effort put into creating this kind of realism in LA Noire makes me excited for what the future holds for games like these.

Looking toward the future

I believe the next big innovation in gaming is not 3D, or even gesture-based first-person shooters, but the implementation of near-real human expression in our avatars and characters. We’ve seen sophisticated human modeling technology in Heavy Rain, and at the micro-expression level in LA Noire, but this is just the tip of the iceberg. Perhaps someday technology will let us catalog human expression in a database (along the lines of Ekman’s Facial Action Coding System, or FACS) that can be implemented accurately without the need to capture actors’ performances. If this is possible, then expressions could be triggered on the fly, which could lead to richer, branching story experiences. I’m not gonna lie… it’s all very exciting stuff and I can’t wait to see what comes next in this vein of story-based gameplay.

More on Paul Ekman Micro-Expressions

This Post Has 5 Comments


Excellent article man! I never knew you were so interested in lie-detection. Sadly, I’ve never seen an episode of Lie to Me.

Sadly, I think Lie to Me was cancelled. But the show was based on Ekman’s work, and I think episodes are available on Hulu or Netflix at this point. EDIT: Seasons 1 and 2 are on Netflix Instant Watch.

Question: will reading this article make me any better at interrogations?

I’m starting this game now and I’m new to microexpressions, lie detection, body language, etc., but I have been studying them all on the side, very casually, for a few months now. I got into this stuff because, without prior training, I tested very high on discerning microexpressions, and when I took the test in other places I kept getting the same unusually high scores. But it still doesn’t really mean much to me. And now here I am, still confused as hell as I play this game, and my supposed skill doesn’t help me at all. Did you ever find out whether Rockstar did any of the things you were wondering about in this blog entry?

To answer your question directly, I don’t know for certain whether, or how, Rockstar intentionally put this into the game. Keep in mind that some of it depends on the actors producing the right responses for the motion capture. Overall, though, I don’t think the acting was the confound here.

I did finish the game after writing this. Looking back, I definitely tried to read the expressions early in the game, and it worked. However, the dialogue becomes more complicated, since Lie and Doubt are really the same thing except that Lie requires evidence. There were times I knew someone was lying, but without evidence “doubt” would be the “wrong answer.” Eventually, I dropped the microexpression strategy and instead just got a better handle on the game mechanics as a whole (evidence, game flow, using the “help” when needed).

So I guess, in the end, the discerning skill will only get you so far in this particular game, even though I believe an effort was made to capture some of that photo-realism in the actors.